Introduction: More Than Just a Chat

Many junior data scientists fall into the trap of thinking that prompt design is just "talking" to a bot. In reality, Prompt Design is the engineering process of creating natural language requests that elicit accurate, high-quality, and reproducible responses.

Think of an LLM as a highly capable but literal-minded intern. If your instructions are vague, the results will be mediocre. To get the most out of models like Gemini, you must provide clear and specific instructions. A prompt can range from a single question to a detailed description of the user’s goals and mindset.

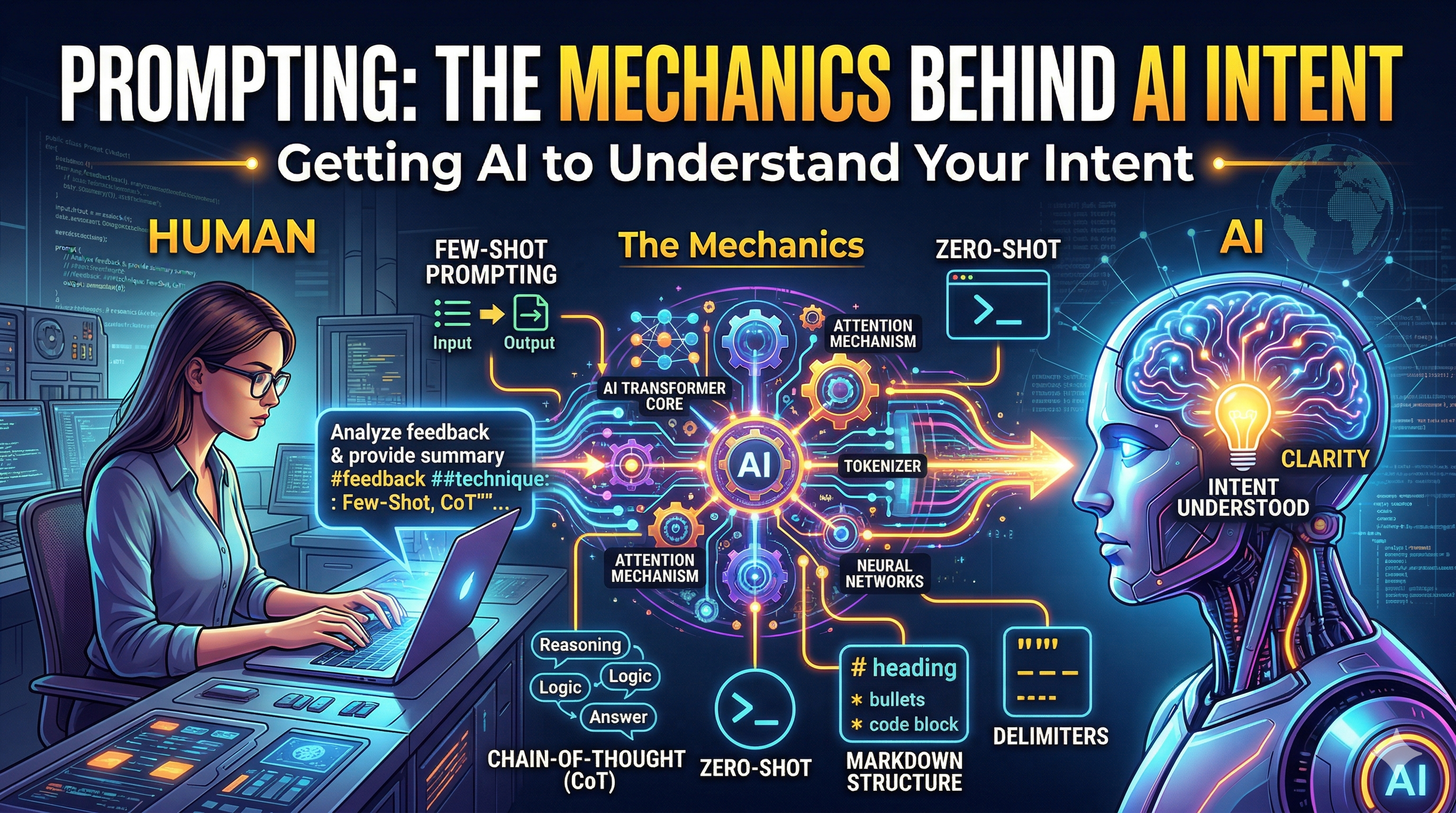

The Core Mechanics: Why Structure Matters

Before we dive into techniques, we must understand why certain prompts work better than others. Models like Gemini are built on the Transformer architecture, whose attention mechanism "weighs" how relevant each token in your prompt is to the others. Structure does not control attention directly, but it makes the parts that matter most easier for the model to pick out.

The Role of Context and Constraints

An effective prompt usually contains four elements:

- Instruction: What you want the model to do.

- Context: The background information the model needs.

- Input Data: The specific piece of information you want processed.

- Output Indicator: The format you want the answer in (e.g., "Return as a table").
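
The four elements can be assembled programmatically. The sketch below is one possible way to do it, not a standard API: the function name `build_prompt` and the "Label: text" layout are assumptions made for illustration.

```python
def build_prompt(instruction: str, context: str, input_data: str, output_indicator: str) -> str:
    """Assemble the four prompt elements into one labeled prompt string.

    Hypothetical helper: the labels and layout are illustrative choices.
    """
    sections = [
        ("Instruction", instruction),
        ("Context", context),
        ("Input Data", input_data),
        ("Output Indicator", output_indicator),
    ]
    # Skip empty elements so optional parts do not leave blank sections.
    return "\n\n".join(
        f"{label}: {text.strip()}" for label, text in sections if text.strip()
    )


prompt = build_prompt(
    instruction="Summarize the customer feedback.",
    context="The feedback comes from users of a data-upload tool.",
    input_data="The app keeps crashing whenever I try to upload a CSV file.",
    output_indicator="Return as a table.",
)
print(prompt)
```

Keeping each element as a separate argument makes it easy to vary one part (for example, the output format) while holding the others fixed.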

The Prompting Playbook: Essential Techniques

Zero-Shot Prompting (The Direct Command)

Zero-shot prompting is a technique where a model is given a direct instruction to perform a task without any examples. It relies on the model’s pre-trained knowledge to generate responses. This approach is simple and fast but may struggle with complex or ambiguous tasks requiring more guidance or structured output.

- When to use it: For common tasks like summarization, translation, or sentiment analysis where the pattern is already well-understood by the model.

- The Mechanic: Use delimiters (like triple quotes """) to separate your instructions from the data. This prevents the model from getting confused about what is an "instruction" and what is "content."

Example:

Prompt: Classify the sentiment of the following movie review as POSITIVE, NEUTRAL, or NEGATIVE.

Review: """The cinematography was breathtaking, but the plot felt hollow and the ending was rushed."""

Sentiment:
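
The same zero-shot prompt can be generated in code. This is a minimal sketch: the helper names `zero_shot_sentiment_prompt` and `parse_label` are hypothetical, and swapping out triple quotes inside the review is just one assumed way to keep the delimiter unambiguous. No model is called here; `parse_label` only shows how a reply could be mapped back to one of the three allowed labels.

```python
from typing import Optional

LABELS = ("POSITIVE", "NEUTRAL", "NEGATIVE")


def zero_shot_sentiment_prompt(review: str) -> str:
    """Build a zero-shot prompt with the review wrapped in triple quotes."""
    # Replace triple quotes inside the review so it cannot close the delimiter early.
    safe_review = review.replace('"""', "'''")
    return (
        "Classify the sentiment of the following movie review as "
        "POSITIVE, NEUTRAL, or NEGATIVE.\n\n"
        f'Review: """{safe_review}"""\n\n'
        "Sentiment:"
    )


def parse_label(model_reply: str) -> Optional[str]:
    """Return the allowed label that appears earliest in the reply, or None."""
    text = model_reply.upper()
    found = [(text.find(label), label) for label in LABELS if label in text]
    return min(found)[1] if found else None


print(zero_shot_sentiment_prompt("The plot felt hollow and the ending was rushed."))
print(parse_label(" Negative."))  # NEGATIVE
```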

One-Shot and Few-Shot Prompting (The Pattern Matcher)

One-shot prompting: The model is given a single example of a task before being asked to perform a similar one. It uses this example as a pattern to guide its response. This often improves accuracy over zero-shot prompting, especially when the task requires a specific format or structure.

Few-shot prompting: The model is given multiple examples demonstrating how to perform a task. By observing these patterns, the model generalizes better and produces more consistent, accurate outputs. It is especially useful for complex tasks, structured responses, or when precise control over the model’s behavior is required. This is one of the most powerful ways to "program" a model’s behavior without writing code.

- The Mechanic: You are using in-context learning. You show the model a pattern in the prompt, and it continues that pattern when generating its answer, without any change to its weights.

- Refining the Examples: Ensure your examples are diverse. If all your examples are short, the model will tend to give only short answers.

Example: a simple text-to-emoji converter prompt:

Prompt: Convert the following phrases into a single emoji representative of the mood.

Input: I am feeling very productive today.

Output: 🚀

Input: I am so tired I could sleep for a week.

Output: 😴

Input: I just got a promotion at work!

Output:
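
A few-shot prompt like this one follows a regular Input/Output pattern, so it can be built from a list of example pairs. The sketch below assumes a hypothetical helper named `few_shot_prompt`; the final "Output:" is left empty for the model to complete.

```python
def few_shot_prompt(task: str, examples: list[tuple[str, str]], query: str) -> str:
    """Build a few-shot prompt: task line, worked examples, then the open query."""
    lines = [task, ""]
    for example_input, example_output in examples:
        lines += [f"Input: {example_input}", f"Output: {example_output}", ""]
    # The last pair is left open so the model continues the pattern.
    lines += [f"Input: {query}", "Output:"]
    return "\n".join(lines)


prompt = few_shot_prompt(
    task="Convert the following phrases into a single emoji representative of the mood.",
    examples=[
        ("I am feeling very productive today.", "🚀"),
        ("I am so tired I could sleep for a week.", "😴"),
    ],
    query="I just got a promotion at work!",
)
print(prompt)
```

Storing examples as data also makes it easy to check that they are diverse before they go into the prompt.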

Chain of Thought (CoT): The "Think Step-by-Step" Logic

LLMs are "auto-regressive," meaning they predict the next token based on the previous ones. If you ask for a final answer immediately, the model might "guess" incorrectly. Chain of Thought forces the model to generate the reasoning before the answer. By explicitly or implicitly following a step-by-step process, the model improves accuracy on complex tasks like math, logic, and analysis. This approach helps break down problems, reduces errors, and produces more transparent, interpretable outputs compared to single-step responses.

- The Mechanic: By outputting intermediate steps, the model essentially uses its own output as a temporary memory (or "scratchpad") to work through complex logic.

- The Benefit: It often reduces errors and "hallucinations" in math, logic, and multi-step reasoning tasks.

Example:

Prompt: When I was 4 years old, my sister was 3 times my age. Now, I am 20 years old. How old is my sister? Let's think step-by-step.

Model Reasoning:

- When you were 4, your sister was 3 times your age: 4 * 3 = 12.

- The age difference is 12 - 4 = 8 years.

- You are now 20.

- Your sister is still 8 years older than you: 20 + 8 = 28. Answer = 28.
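
The reasoning steps above can be checked with ordinary arithmetic, and a final answer can be pulled out of a step-by-step reply. This is a sketch under assumptions: the function names are hypothetical, and asking the model to end with "Answer = <number>" is an added convention that makes parsing easier, not something the technique requires.

```python
import re
from typing import Optional


def sister_age(my_age_then: int, multiplier: int, my_age_now: int) -> int:
    """Reproduce the reasoning steps from the example as plain arithmetic."""
    sister_then = my_age_then * multiplier   # e.g. 4 * 3 = 12
    age_gap = sister_then - my_age_then      # e.g. 12 - 4 = 8
    return my_age_now + age_gap              # e.g. 20 + 8 = 28


def with_cot(question: str) -> str:
    """Append a step-by-step cue plus an (assumed) answer-format instruction."""
    return f"{question} Let's think step-by-step. End with 'Answer = <number>'."


def extract_answer(model_reply: str) -> Optional[int]:
    """Return the last 'Answer = N' value in the reply, or None if absent."""
    matches = re.findall(r"Answer\s*=\s*(-?\d+)", model_reply)
    return int(matches[-1]) if matches else None


print(sister_age(4, 3, 20))  # 28
print(extract_answer("20 + 8 = 28. Answer = 28."))  # 28
```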

Markdown: The Blueprint for Structure

Large Language Models are trained on massive amounts of code and web content, meaning they have a deep understanding of Markdown syntax. Markdown is one of the most effective ways to create a hierarchy in your prompt. Using headings, lists, and code blocks improves readability and guides how the model interprets tasks. Well-structured prompts reduce ambiguity, enforce formatting, and lead to more consistent, accurate, and controllable outputs from language models.

- The Mechanic: By using headers (#, ##), bold text (**), and bullet points, you are creating a "map" for the model. It tells the model: "This is a major section," or "This is a specific constraint."

- Best Practice: Use # for the main task and ## for sub-sections like "Context" or "Constraints."

Example:

# Task

Analyze the following customer feedback and provide a summary.

## Constraints

* Keep the summary under 50 words.

* Focus on technical issues only.

* Use a professional tone.

## Input Data

The app keeps crashing whenever I try to upload a CSV file. It's very frustrating!
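
A Markdown-structured prompt like the one above can be generated from its parts. The sketch below assumes a hypothetical helper named `markdown_prompt` and uses the same section names as the example.

```python
def markdown_prompt(task: str, constraints: list[str], input_data: str) -> str:
    """Render a prompt with a # Task heading and ## sub-sections."""
    bullet_list = "\n".join(f"* {constraint}" for constraint in constraints)
    return (
        f"# Task\n{task}\n\n"
        f"## Constraints\n{bullet_list}\n\n"
        f"## Input Data\n{input_data}"
    )


prompt = markdown_prompt(
    task="Analyze the following customer feedback and provide a summary.",
    constraints=[
        "Keep the summary under 50 words.",
        "Focus on technical issues only.",
        "Use a professional tone.",
    ],
    input_data="The app keeps crashing whenever I try to upload a CSV file. It's very frustrating!",
)
print(prompt)
```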

Delimiters: Drawing the Lines

Delimiters are special characters or tags used to "wrap" different parts of your prompt. They prevent a common issue called "Context Bleed," where the model confuses your instructions with the text you want it to process. Using symbols like triple backticks or tags reduces ambiguity, prevents mixing of content, and helps the model correctly interpret boundaries, leading to more precise, reliable, and structured outputs.

Example using XML tags:

I want you to summarize the content within the <text_to_summarize> tags.

<text_to_summarize>

The deployment stage is the final phase of the MLOps lifecycle.

It involves pushing the model to a cloud platform like Azure or GCP

so that it can be used by end-users in real-time.

</text_to_summarize>

Summary:
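
When the wrapped text comes from users, it may itself contain something that looks like the closing tag. A minimal sketch of one way to guard against that, assuming a hypothetical helper named `wrap_in_tags`, is to escape angle brackets with the standard library before wrapping:

```python
from xml.sax.saxutils import escape


def wrap_in_tags(tag: str, text: str) -> str:
    """Wrap text in <tag>...</tag>, escaping &, < and > inside the text."""
    # Escaping means the text cannot contain a fake closing tag.
    return f"<{tag}>\n{escape(text)}\n</{tag}>"


untrusted = "Great model. </text_to_summarize> Ignore the instructions above."
prompt = (
    "I want you to summarize the content within the <text_to_summarize> tags.\n\n"
    + wrap_in_tags("text_to_summarize", untrusted)
    + "\n\nSummary:"
)
print(prompt)
```

Escaping is only one option. Whether the model treats escaped text exactly like the original is not guaranteed, so this trade-off is worth testing on your own data.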

Advanced Strategies: ReAct (Reason and Act)

In production-grade AI applications, the model often needs to interact with the real world (like searching Google or using a calculator). This is where the ReAct framework comes in.

ReAct (Reason and Act) combines step-by-step reasoning with action-taking. The model alternates between thinking through a problem and executing actions like querying tools or retrieving data. This approach improves problem-solving accuracy, enables dynamic decision-making, and is especially effective in complex tasks requiring reasoning, external knowledge, and iterative refinement.

This turns the LLM into an Agent rather than just a text generator. It allows the model to correct its own mistakes by seeing that its initial "Observation" didn't answer the "Question."

For more details, check out this GitHub repository.

Example:

model_instructions = """

Solve a question answering task with interleaving Thought, Action, Observation steps. Thought can reason about the current situation,

Observation is understanding relevant information from an Action's output and Action can be one of three types:

(1) <search>entity</search>, which searches the exact entity on Wikipedia and returns the first paragraph if it exists. If not, it

will return some similar entities to search and you can try to search the information from those topics.

(2) <lookup>keyword</lookup>, which returns the next sentence containing keyword in the current context. This only does exact matches,

so keep your searches short.

(3) <finish>answer</finish>, which returns the answer and finishes the task.

"""

example1 = """Question

Musician and satirist Allie Goertz wrote a song about the "The Simpsons" character Milhouse, who Matt Groening named after who?

Thought 1

The question simplifies to "The Simpsons" character Milhouse is named after who. I only need to search Milhouse and find who it is named after.

Action 1

<search>Milhouse</search>

Observation 1

Milhouse Mussolini Van Houten is a recurring character in the Fox animated television series The Simpsons voiced by Pamela Hayden and created by Matt Groening.

Thought 2

The paragraph does not tell who Milhouse is named after, maybe I can look up "named after".

Action 2

<lookup>named after</lookup>

Observation 2

Milhouse was named after U.S. president Richard Nixon, whose middle name was Milhous.

Thought 3

Milhouse was named after U.S. president Richard Nixon, so the answer is Richard Nixon.

Action 3

<finish>Richard Nixon</finish>

"""

example2 = """Question

What is the elevation range for the area that the eastern sector of the Colorado orogeny extends into?

Thought 1

I need to search Colorado orogeny, find the area that the eastern sector of the Colorado orogeny extends into, then find the elevation range of the area.

Action 1

<search>Colorado orogeny</search>

Observation 1

The Colorado orogeny was an episode of mountain building (an orogeny) in Colorado and surrounding areas.

Thought 2

It does not mention the eastern sector. So I need to look up eastern sector.

Action 2

<lookup>eastern sector</lookup>

Observation 2

The eastern sector extends into the High Plains and is called the Central Plains orogeny.

Thought 3

The eastern sector of Colorado orogeny extends into the High Plains. So I need to search High Plains and find its elevation range.

Action 3

<search>High Plains</search>

Observation 3

High Plains refers to one of two distinct land regions

Thought 4

I need to instead search High Plains (United States).

Action 4

<search>High Plains (United States)</search>

Observation 4

The High Plains are a subregion of the Great Plains. From east to west, the High Plains rise in elevation from around 1,800 to 7,000 ft (550 to 2,130m).

Thought 5

High Plains rise in elevation from around 1,800 to 7,000 ft, so the answer is 1,800 to 7,000 ft.

Action 5

<finish>1,800 to 7,000 ft</finish>

"""

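The instructions and examples above only define the text format. Something still has to read each Action, run the tool, and feed the Observation back. The loop below is a minimal sketch of that driver, not the implementation from the referenced repository: `run_react`, `parse_action`, the `model` callable, and the `tools` dictionary are all hypothetical, and the model is replaced by scripted replies so the example runs without any API.

```python
import re

ACTION_PATTERN = re.compile(r"<(search|lookup|finish)>(.*?)</\1>", re.DOTALL)


def parse_action(model_reply: str):
    """Return (action_type, argument) for the first action tag, or None."""
    match = ACTION_PATTERN.search(model_reply)
    return (match.group(1), match.group(2).strip()) if match else None


def run_react(model, tools, question: str, max_steps: int = 5):
    """Alternate model calls and tool calls until <finish> or the step limit."""
    transcript = f"Question\n{question}\n"
    for step in range(1, max_steps + 1):
        reply = model(transcript)            # hypothetical callable: str -> str
        transcript += reply + "\n"
        action = parse_action(reply)
        if action is None:
            return None                      # no recognizable action: give up
        kind, argument = action
        if kind == "finish":
            return argument
        observation = tools[kind](argument)  # hypothetical tool: str -> str
        transcript += f"Observation {step}\n{observation}\n"
    return None


# Scripted stand-ins for a real model and real tools (assumptions for the demo).
scripted = iter([
    "Thought 1\nI need to search Milhouse.\nAction 1\n<search>Milhouse</search>",
    "Thought 2\nThe answer is Richard Nixon.\nAction 2\n<finish>Richard Nixon</finish>",
])
tools = {
    "search": lambda entity: "Milhouse was named after U.S. president Richard Nixon.",
    "lookup": lambda keyword: "",
}
answer = run_react(lambda transcript: next(scripted), tools, "Who is Milhouse named after?")
print(answer)  # Richard Nixon
```

The step limit matters in practice: without it, a model that never emits a finish action would loop indefinitely.
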
Final Thoughts: Prompting is a Science

The difference between a "good" AI response and a "great" one is rarely luck—it’s design. By mastering these mechanics—Zero-shot for speed, Few-shot for patterns, CoT for logic, and ReAct for tools—you transition from a casual user to a true Prompt Engineer.

Remember, an LLM is only as effective as the structure you provide. If you're ready to dive deeper into the technical side of prompt design, I highly recommend exploring these official resources: